North Korean strike plan for mainland U.S. revealed and EMP risks evaluated

Above: a peak of 21,244 hits per month occurred on this blog in December 2012, when North Korea successfully launched its 3-stage Unha-3 rocket, placing a satellite into orbit. There were few hits on this blog from North Korea, but there was interest from Russia. Judging by the size of the vast USSR civil defense system during the Cold War, and by the early USSR EMP space bursts of 22 October and 1 November 1962, there are no real "secrets" here for Russians to find: they already have the data.

North Korean leader Kim Jong Un photographed on 29 March 2013 in front of a large map labelled “U.S. Mainland Strike Plan,” with missile trajectories plotted from North Korea to four American targets: Hawaii (Pacific), San Diego (California), Washington D.C., and Austin (Texas). The question is whether these are intended EMP target points (high-altitude nuclear bursts).

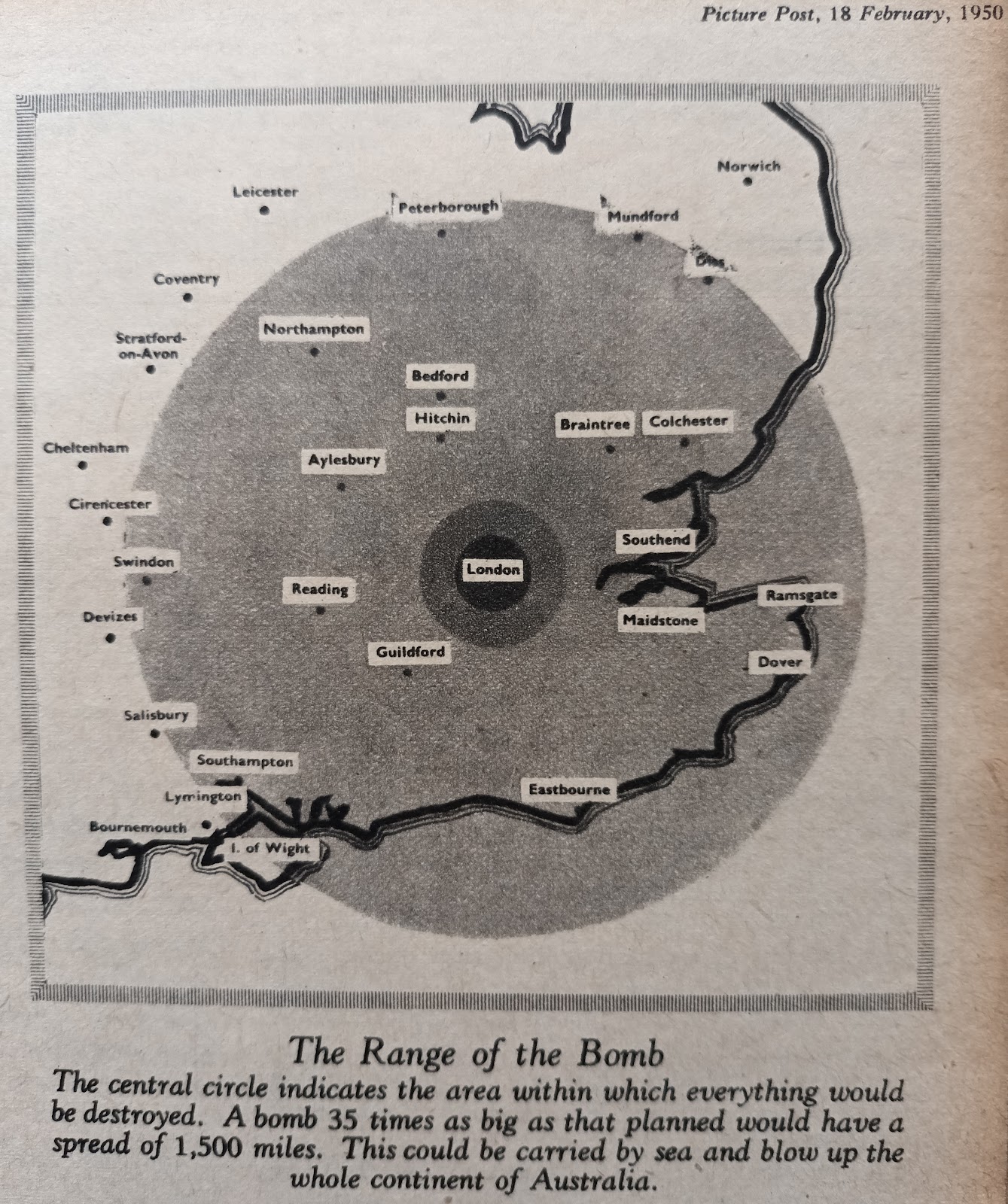

North Korea has tested nuclear weapons (0.48 kiloton on 9 Oct 2006, 2.35 kilotons on 25 May 2009, and 7.7 kilotons on 12 Feb 2013) and missiles, most recently placing a satellite in orbit on 12 Dec 2012 using a 3-stage rocket. This indicates that North Korea could deliver nuclear warheads with yields exceeding 7 kilotons to detonate 75 km above several major American cities, producing E1 (prompt gamma ray) EMP damage that could cripple the USA. As the graphs and maps below show, even major inaccuracies in detonation location and altitude would have comparatively little effect on the devastating EMP results.
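The claim that errors in burst location and altitude matter comparatively little can be illustrated with simple geometry: a burst at height h is in line of sight of the ground out to the horizon (tangent) point. The sketch below is a minimal illustration assuming a spherical Earth of radius 6,371 km; the function names are hypothetical, and it shows only the size of the region in line of sight, not the E1 field strengths plotted in the graphs.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean Earth radius; assumes a spherical Earth


def ground_range_km(burst_height_km: float) -> float:
    """Great-circle distance from ground zero to the horizon (tangent point)
    seen from a burst at the given height."""
    r = EARTH_RADIUS_KM
    return r * math.acos(r / (r + burst_height_km))


def slant_range_km(burst_height_km: float) -> float:
    """Straight-line distance from the burst to that tangent point."""
    r = EARTH_RADIUS_KM
    return math.sqrt((r + burst_height_km) ** 2 - r ** 2)


if __name__ == "__main__":
    for h in (50, 75, 100, 400):
        print(f"burst at {h:>3} km: horizon {ground_range_km(h):,.0f} km from ground zero "
              f"(slant range {slant_range_km(h):,.0f} km)")
```

In this sketch, moving the burst height from 50 km to 100 km changes the radius of line-of-sight coverage from roughly 800 km to roughly 1,100 km, so even a large altitude error still leaves a footprint well over 1,500 km across.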

Above: E1 (prompt gamma ray) EMP field strengths for a 0.3 gauss magnetic field at the equator. Over the central USA, the EMP strengths are doubled because the magnetic field is twice as strong, about 0.6 gauss. Notice that, owing to the conduction current, the EMP increases only slowly with bomb yield. (Note also that the EMP which crippled 30 strings of streetlamps in Hawaii, 1,300 km from the 9 July 1962 nuclear test launched from Johnston Island, was only 5.6 kV/m in strength, as Dr Longmire reveals in EMP interaction note 353. That was in the era before EMP-sensitive modern electronics, so all of the damage in 1962 was caused to large, relatively insensitive overload fuses, not to microprocessors, computers and power supplies.)

If the North Korean bombs have a thin beryllium tamper and a minimal thickness of high explosive around the core, quite a high fraction of the prompt gamma rays will be released. (3.5% of the energy of fission is in the form of highly penetrating ~2 MeV prompt gamma rays, many of which can escape from small, low-yield bombs with relatively little shielding around the core.) The distribution of the EMP over America is plotted in the graphs below, taken from the recent 2010 report for the US EMP Commission, Meta-R-320.
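As a rough scale for the prompt gamma output, the 3.5% figure can be applied to the test yields listed earlier. This is a hedged sketch: it assumes the entire yield comes from fission, uses the standard 4.184 × 10^12 J per kiloton, and treats the fraction escaping the tamper, high explosive and casing as a hypothetical `escape_fraction` parameter, since the actual North Korean designs are unknown.

```python
KT_TNT_J = 4.184e12            # 1 kiloton of TNT equivalent, in joules (by definition)
PROMPT_GAMMA_FRACTION = 0.035  # share of fission energy emitted as prompt gamma rays (from the text)


def prompt_gamma_energy_j(yield_kt: float, escape_fraction: float = 1.0) -> float:
    """Prompt gamma-ray energy escaping the device, in joules.

    Assumes the whole yield comes from fission. escape_fraction is a
    hypothetical parameter for the share not absorbed by the tamper,
    high explosive and casing (1.0 means no absorption at all).
    """
    if yield_kt < 0:
        raise ValueError("yield must be non-negative")
    if not 0.0 <= escape_fraction <= 1.0:
        raise ValueError("escape_fraction must lie between 0 and 1")
    return yield_kt * KT_TNT_J * PROMPT_GAMMA_FRACTION * escape_fraction


if __name__ == "__main__":
    # Yields of the three North Korean tests listed earlier in the post
    for y in (0.48, 2.35, 7.7):
        print(f"{y:>5} kt: at most {prompt_gamma_energy_j(y):.2e} J of prompt gammas")
```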

Herman Kahn surveyed the wide spectrum of coercive uses for nuclear weapons tests - from underwater to high altitude (EMP effects) - in his 1965 book, On Escalation, pages 214-5 (linked here):

'Consider ... the use of nuclear weapons to coerce an opponent by means of a spectacular show of force. In this case, it is clear that there is an almost continuous spectrum of alternatives available. They can be ranked as follows:

'1. Testing a large weapon for purely technical reasons almost as part of a normal test programme.

'2. Testing a very large weapon, or testing on a day that has particular political significance, or both.

'3. Testing a weapon off the coast of the antagonist so that the populace can observe it.

'4. Testing a weapon high in outer space near the antagonist's airspace [EMP].

'5. Testing lower in outer space, or directly over the opponent's country [EMP].

'6. Testing so low that the shock wave is heard by everybody, and perhaps a few windows are broken.'

Above: America is preparing for urban nuclear detonations because of nuclear proliferation, which itself stems from the attraction, for dictatorships, of the exaggerated hype about urban nuclear weapons effects from the Cold War era. In the Cold War, exaggerations aided the nuclear deterrence of the tremendous conventional forces of the Warsaw Pact, a deterrent that was relatively cheap (compared with conscripting half the population into a conventional army). For example, at the 1953 Operation Upshot Knothole test Encore, houses were built with a clear radial line of sight to the fireball in the unobstructed Nevada desert. That test proved that even if every building in a city were knocked down to remove all shadowing, thermal radiation still could not ignite a whitewashed wooden house, although it did ignite one packed with inflammables at the (very dry) 19% relative humidity of the test. The same stunt was repeated in 1955 at Operation Teapot, shot Apple 2, where thermal, blast and nuclear radiation effects were again exaggerated by failing to simulate the shadowing and shielding of a modern city. For Hiroshima, the secret USSBS report 92, volume 2, showed an enormous difference between the mean areas of effectiveness for destroying modern city buildings and those for destroying the predominant wooden houses, which are not found in the modern city centres that would be the targets of a nuclear attack:

Glasstone and Dolan write in the 1977 edition of The Effects of Nuclear Weapons, pages 611-612 (paragraphs 12.209-12.211):

"From the earlier studies of radiation-induced mutations, made with fruitflies, ... The mutation frequency appeared to be independent of the rate at which the radiation dose was received. ... More recent experiments with mice, however, have shown that these conclusions must be revised, at least for mammals. ... in male mice ... For exposure rates from 90 down to 0.8 roentgen per minute ... the mutation frequency per roentgen decreases as the exposure rate is decreased. ... in female mice ... The radiation-induced mutation frequency per roentgen decreases continuously with the exposure rate from 90 roentgens per minute downward. At an exposure rate of 0.009 roentgen per minute [0.54 roentgen/hour], the total mutation frequency in female mice is indistinguishable from the spontaneous frequency. There thus seems to be an exposure-rate threshold below which radiation-induced mutations are absent or negligible, no matter how large the total (accumulated) exposure to the female gonads, at least up to 400 roentgens."
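The exposure rates in this passage are given per minute, while the threshold is usually quoted per hour. The minimal helper below (hypothetical function names) only restates the quoted figures: 0.009 R/min is 0.54 R/hour, and accumulating the 400 R mentioned at that threshold rate takes roughly a month. It does not model mutation frequencies.

```python
MIN_PER_HOUR = 60.0


def r_per_min_to_r_per_hour(rate_r_per_min: float) -> float:
    """Convert an exposure rate from roentgens per minute to roentgens per hour."""
    return rate_r_per_min * MIN_PER_HOUR


def hours_to_accumulate(total_r: float, rate_r_per_hour: float) -> float:
    """Time needed to accumulate a total exposure at a constant exposure rate."""
    if rate_r_per_hour <= 0:
        raise ValueError("exposure rate must be positive")
    return total_r / rate_r_per_hour


if __name__ == "__main__":
    # Exposure rates quoted by Glasstone and Dolan, in R/min
    for label, rate in (("male mice, upper rate", 90.0),
                        ("male mice, lower rate", 0.8),
                        ("female-mouse threshold", 0.009)):
        print(f"{label}: {r_per_min_to_r_per_hour(rate):g} R/hour")
    threshold = r_per_min_to_r_per_hour(0.009)
    days = hours_to_accumulate(400.0, threshold) / 24
    print(f"400 R at {threshold:.2f} R/hour takes about {days:.0f} days")
```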

Above: land-equivalent 48-hour downwind fallout doses from even dirty H-bombs would be survivable in a modern concrete city building with a protection factor of 10 or more, and people could evacuate from the path of the fallout, which is even more predictable today with modern weather computers than it was in the 1950s.

"Outrageous, unsubstantiated statements are made concerning the hazards of ionizing radiation, in spite of a vast published, peer-reviewed literature … The result of this deception is not insignificant: literally millions of lives are less healthy … annual billions of dollars spent needlessly to protect us from radiation that we need for optimal health. Radiophobia limits the political will of people and governments … Radiophobia prevents the logical and safe burial of nuclear wastes. Radiophobia causes serious psychological effects leading to loss of life (>100,000 abortions and >1,000 suicides attributed to Chernobyl fallout). My career was initially funded by the AEC, starting at the Radiobiology Laboratory at Texas A & M … All graduate students in the lab participated in a large reproduction study with rats who received continuous gamma irradiation … I can remember their discussions about why rats receiving 20 mSv/day [2 R/day] (~ 7 Sv per year) lived longer … I spent about 25 years (1968-93) working on inhalation toxicology of transuranics [plutonium, etc.] in the Biology department at PNL … A large practical threshold of 2-10 Gy [200-1,000 rads, for low dose rates of ~10 mR/hour] is seen in humans for thorotrast patients (liver cancer) and radium dial painters (bone cancer)."
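The doses in this quotation mix units (mSv/day, Sv per year, Gy and mR/hour). A small conversion sketch, assuming a constant dose rate, 365.25 days per year, and 1 R ≈ 1 rad in tissue (an approximation that is not in the quotation), shows how they relate: 20 mSv/day is about 7.3 Sv per year, 2-10 Gy is 200-1,000 rad, and reaching 200 rad at 10 mR/hour takes a little over two years. The function names are illustrative only.

```python
DAYS_PER_YEAR = 365.25
RAD_PER_GY = 100.0  # 1 Gy = 100 rad by definition


def annual_dose_sv(daily_dose_msv: float) -> float:
    """Dose accumulated over one year at a constant daily dose given in mSv."""
    return daily_dose_msv * DAYS_PER_YEAR / 1000.0


def gy_to_rad(dose_gy: float) -> float:
    """Convert an absorbed dose from gray to rad."""
    return dose_gy * RAD_PER_GY


def years_to_accumulate(dose_rad: float, rate_mr_per_hour: float) -> float:
    """Years to reach dose_rad at a constant rate, assuming 1 R ~ 1 rad in tissue."""
    hours = dose_rad / (rate_mr_per_hour / 1000.0)
    return hours / (24 * DAYS_PER_YEAR)


if __name__ == "__main__":
    print(f"20 mSv/day -> {annual_dose_sv(20.0):.1f} Sv per year")
    print(f"2-10 Gy -> {gy_to_rad(2.0):.0f}-{gy_to_rad(10.0):.0f} rad")
    print(f"200 rad at 10 mR/hour -> {years_to_accumulate(200.0, 10.0):.1f} years")
```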

Above: In unstressed cells, the level of the tumour-suppressor protein p53 is minimised by its binding to the MDM2 protein, the product of an oncogene, which normally inhibits p53. (Second illustration from http://p53.free.fr/p53_info/p53_dev.html. See also Lawrence Donehower et al., "Mice deficient for p53 are developmentally normal but susceptible to spontaneous tumours," Nature 356: 215-221.)

Because p53 switches on production of MDM2, MDM2 levels rise with p53, creating a negative-feedback "regulation loop" that keeps p53 at a low level in normal cells. Low-level radiation exposure increases tumor-suppressor levels, reducing cancer:

Radiation causes stress mediators (like ATM and CHK2) to "activate" p53 by separating the protein p53 from its inhibitor, MDM2. This increases the level of active p53, not bound to MDM2, for 12 hours after irradiation. Thus, low level radiation causes an increase in tumor-suppressing p53, reducing the cancer and genetic risks by repairing DNA damage that would otherwise lead to cancer or genetic defects in offspring.

Direct measurements reported by Jamie Lamkin in his book, Investigating the Role of p53 in the Germ Cell Apoptotic Pathway (Rhode Island College, 2011, Chapter 2, "Effect of Radiation Exposure on p53 in Mouse Germ Cells," Figure 9), showed a 5-fold increase in tumor-suppressing p53 levels in cells, peaking 6 hours after mice were exposed to 5 Gy of Cs-137 gamma radiation delivered over 44 minutes. This proves that the radiation stimulated the potential for repairing DNA damage; however, at high dose rates some DNA damage can occur before p53 levels rise enough to suppress it.
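For comparison with the dose-rate threshold discussed in this post, the exposure described above can be converted to a mean dose rate. The sketch assumes the 5 Gy was delivered uniformly over the 44 minutes (the text gives only the total dose and exposure time) and compares it with the 0.54 rad/hour female-mouse figure from Russell's work; the result, roughly 680 rad/hour, is more than a thousand times that threshold. The function name is hypothetical.

```python
RAD_PER_GY = 100.0
THRESHOLD_RAD_PER_HOUR = 0.54  # female-mouse threshold cited elsewhere in this post


def mean_dose_rate_rad_per_hour(dose_gy: float, exposure_min: float) -> float:
    """Average dose rate for a dose delivered uniformly over exposure_min minutes."""
    if exposure_min <= 0:
        raise ValueError("exposure time must be positive")
    return dose_gy * RAD_PER_GY / (exposure_min / 60.0)


if __name__ == "__main__":
    rate = mean_dose_rate_rad_per_hour(5.0, 44.0)  # 5 Gy over 44 minutes
    print(f"mean dose rate {rate:.0f} rad/hour, "
          f"about {rate / THRESHOLD_RAD_PER_HOUR:,.0f} times 0.54 rad/hour")
```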

The mechanism for DNA repair and cancer avoidance by p53 is as follows (note that other tumour suppressor genes also exist, e.g. PTEN regulates the growth rate of cells, but p53 is central):

1. Once activated by radiation, p53 arrests the cell cycle at the G1/S regulation point by activating the expression of a transducer gene such as the kinase inhibitor p21 (which stops the cell division cycle by binding to CDK2) or the Growth Arrest and DNA Damage "GADD45" gene. Then, while the cell cycle is stopped, it repairs the DNA damage using a DNA repair enzyme such as p53R2, GADD45, p48 or XPC.

2. If the damage is beyond safe repair, p53 uses genes like DR5, PIG3, AIP1, Noxa, Bax-2, Puma or Fas to produce proteins that kill the cell by "apoptosis" (programmed cell death), preventing it from turning carcinogenic. p53 is so effective at preventing cancer that most cancers (over 50% of human tumours, including lung, colon, breast, cervical and bladder cancer) arise only when defective mutations of the p53 gene (TP53) occur, because defective p53 cannot activate p21 to stop a cell’s division for repair work (reference: M. Hollstein, et al., "p53 mutations in human cancers," Science, v253, 1991, pp.49-53).

The importance of p53 is shown by the fact that most of those who inherit a mutated p53 gene suffer from childhood cancers (Li-Fraumeni syndrome). Similar early vulnerability to cancer was also observed in p53-deficient mice (Reference: Lawrence A. Donehower, et al., "Mice deficient for p53 are developmentally normal but susceptible to spontaneous tumours," Nature, v356, 1992, pp. 215-21).

Toshiyuki Norimura, et al., "p53-dependent apoptosis suppresses radiation-induced teratogenesis [birth defects]," Nature Med. 1996 May;2(5):577-80: "This reciprocal relationship of radiosensitivity to anomalies and to embryonic or fetal lethality supports the notion that embryonic or fetal tissues have a p53-dependent "guardian" of the tissue that aborts cells bearing radiation-induced teratogenic [birth defect-causing] DNA damage."

It should be noted that p53 suppresses all kinds of DNA damage, both the damage leading to cancer and that in germ cell DNA which leads to genetic defects in offspring. p53 cancer suppression is stimulated by radiation, which releases p53 from its MDM2 inhibitor. These are hard scientific facts that explain the evidence of lifespan increases in controlled irradiation experiments with mice, and the bone cancer threshold dose-rate evidence from human radium dial painters, whose bones were measured to determine their radium content accurately. Cancer occurs when low levels of p53 repair multiple double-strand breaks (entire chromosome breaks) too slowly, allowing mistakes to occur, mainly through the loss of sections of DNA that contain tumor suppressor genes or cell cycle regulation genes (p53 or PTEN). The cell that no longer has these vital genes then starts undergoing uncontrolled proliferation without adequate DNA repair mechanisms in place. In the second stage, the defective cells undergo evolution as more and more errors accumulate in their DNA. This accounts for the delay of years that a cancer usually takes to evolve aggressive characteristics, like a special network of blood supply vessels and extra insulin and insulin-like growth factor (IGF) receptors, both of which speed up the growth of the cancer. These facts are relevant to the long-term risks from exposure to high-dose-rate, high-dose radiation, because someone who knows they have been exposed can take precautions to reduce the risk of this second stage of evolution into aggressive cancers. Minimal intake of simple sugars and regular exercise (to keep blood insulin levels low) help to reduce the risk of aggressive tumors evolving in this second stage.

"Chromosome breaks occur at all dose rates, but the … dose rate dependence … depends on the presence of two breaks in close proximity at the same time. The probability of this occurring will be greater at high dose rates than at low, since the breaks are assumed to stay open for only a few minutes [before repair by a DNA repair enzyme, like p53]. Unless another break is produced close by within this period, the first will reconstitute and no injury will be seen. … Austrian miners from Schneeberg and Joachimsthal [inhaled radon gas resulting in lung cancer after an average period of] about seventeen years, and during this time the lungs of the miners will have received a dose of at least 1,000 rads and possibly more."

- Dr Peter Alexander, Atomic Radiation and Life, Penguin Books, 1957, pages 59, 148-9.
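Dr Alexander's argument, that chromosome damage needs two breaks close together while the first is still open, can be sketched as a Poisson coincidence model. This is an idealisation substituted here for illustration, not Alexander's own calculation: the break yield per rad and the 5-minute repair window (standing in for "a few minutes") are hypothetical values, and the two dose rates are the 30 rad/min and 1.45 rad/hour used in the Nowell and Cole mouse experiment. Per rad of total dose, the chance of a second break arriving in time falls as the dose rate falls, which is the dose-rate dependence described in the quotation.

```python
import math


def coincidence_probability(dose_rate_rad_per_min: float,
                            breaks_per_rad: float,
                            repair_window_min: float) -> float:
    """Probability that a second nearby break occurs while a first break is
    still open, treating break production as a Poisson process."""
    breaks_per_min = breaks_per_rad * dose_rate_rad_per_min
    return 1.0 - math.exp(-breaks_per_min * repair_window_min)


def misjoin_chances_per_rad(dose_rate_rad_per_min: float,
                            breaks_per_rad: float = 0.01,
                            repair_window_min: float = 5.0) -> float:
    """Expected break pairs per rad of total dose (hypothetical defaults)."""
    return breaks_per_rad * coincidence_probability(
        dose_rate_rad_per_min, breaks_per_rad, repair_window_min)


if __name__ == "__main__":
    high = misjoin_chances_per_rad(30.0)         # 30 rad/min
    low = misjoin_chances_per_rad(1.45 / 60.0)   # 1.45 rad/hour
    print(f"per-rad pair chance is {high / low:,.0f} times higher at the high rate")
```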

"A marked contrast in the production of persistent chromosome aberrations in mouse cells by ionizing radiation delivered at a high dose rate (30 rad/min) versus a low dose rate (1.45 rad/hour) was observed. ... none of the 17 mice exposed to the continuous low dose rate gamma radiation (1.45 rad/hour) showed definite clones of abnormal marrow cells ... If some of the late effects of radiation, particularly leukemia incidence, are related to the frequency of chromosome aberrations, it is possible that low dose rate gamma radiation may be less leukemogenic than high dose rate radiation."

- P. C. Nowell and L. J. Cole, Reduced incidence of persistent chromosome aberrations in mice irradiated at low dose rate, USNRDL-TR-644, 6 May 1963. (Note that William Russell at Oak Ridge National Laboratory later determined a dose-rate threshold of 0.54 rad/hour for net genetic damage in female mice, using 7 million mice in the "megamouse project" discussed in Glasstone and Dolan's 1977 edition of The Effects of Nuclear Weapons; see http://arxiv.org/abs/1205.3261 for a summary of the scientific data obtained. It turns out that at dose rates below 0.54 rad/hour, p53 is able to repair DNA damage to the eggs of female mice. This all remains an "immoral heresy" to the dogmatic Pauling myth alleging "no safe threshold" for radiation, so it is censored out by the media!)

Cancer arises from multiple double-strand breaks that are incorrectly repaired at high dose rates, or in situations where DNA repair enzymes are dysfunctional due to loss, mutation, or breakdown of the activation mechanism that, under radiation stress, releases DNA repair proteins from the inhibitors that bind them in healthy cells. At normal mammalian cell temperatures, the Brownian motion of water molecules is sufficient to induce single-strand DNA breaks (only one strand in the double helix of DNA being broken) at a rate of 2 per cell per second. These single-strand breaks are easily repaired without shutting down the cell cycle, because the other strand of the double helix continues to hold the molecule together, preventing any risk of sections of DNA being transposed within genes along chromosomes. Since each base in one strand of the double helix is always paired with a matching base in the other strand, correct repair of single-strand breaks is easy: a missing base in a single-strand break is simply replaced with the base that pairs with the remaining base in the unbroken strand.

Double strand breaks, in which both strands in the double helix are severed

In addition, natural double-strand breaks, in which both strands in the double helix of a DNA molecule are broken, occur at a rate of 0.5 per cell per hour (i.e., 0.007% of all natural DNA breaks). Unlike single-strand breaks, double-strand breaks completely sever the chromosome at the break point, because both strands of the double helix are cut. If two double-strand breaks occur in rapid succession within a chromosome, a section of DNA is completely freed, and in the fluid environment of the cell it may rotate or even be lost before the loose ends are reconnected by a DNA repair enzyme. If the wrong ends of severed DNA segments are connected during the repair process, a cancer may occur, depending on which genes have been transposed or lost by the error.
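The 0.007% figure follows directly from the two natural break rates given in these paragraphs. The sketch below (hypothetical function names) recomputes it and also expresses both rates per cell per day: 172,800 single-strand breaks against 12 double-strand breaks.

```python
SECONDS_PER_HOUR = 3600
SINGLE_STRAND_PER_SECOND = 2.0  # natural single-strand breaks per cell (from the text)
DOUBLE_STRAND_PER_HOUR = 0.5    # natural double-strand breaks per cell (from the text)


def double_strand_share() -> float:
    """Double-strand breaks as a fraction of all natural DNA breaks."""
    single_per_hour = SINGLE_STRAND_PER_SECOND * SECONDS_PER_HOUR
    return DOUBLE_STRAND_PER_HOUR / (single_per_hour + DOUBLE_STRAND_PER_HOUR)


def breaks_per_day() -> tuple[float, float]:
    """(single-strand, double-strand) natural breaks per cell per day."""
    return (SINGLE_STRAND_PER_SECOND * SECONDS_PER_HOUR * 24,
            DOUBLE_STRAND_PER_HOUR * 24)


if __name__ == "__main__":
    print(f"double-strand share: {double_strand_share():.4%}")
    print("breaks per cell per day (single, double):", breaks_per_day())
```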

Cancer growth is accelerated by high blood insulin and insulin-like growth factors like IGF-1, since cancer cells typically have more insulin and insulin-like growth factor receptors than healthy cells. Aggressive cancers proliferate by rapid cellular division, so they have a higher metabolism than healthy cells. Blood glucose levels control insulin levels. While all healthy cells require glucose obtained from food of all types, simple sugars are broken down into glucose more rapidly than complex sugars in the form of starch. Simple sugar ingestion may preferentially fuel cancer proliferation by delivering 20 calories/minute to the bloodstream, compared with just 2 calories/minute for the breakdown of complex sugars from foods like potato starch. Obtaining glucose through the slow breakdown of complex sugars in starch, or from fat, minimises the blood glucose level and therefore the insulin level, which limits the rate of proliferation of cancer. Alarmingly, these facts have been obfuscated and dismissed by oversimplifications that merely claim that "all cells need glucose." The research literature indicates that low glucose and low insulin can reduce cancer cells to a fasting condition with slow proliferation. Another area needing research is "insulin potentiation therapy," in which a combination of reduced blood glucose with excess insulin and insulin-like growth factors has been suggested as a way to starve aggressive, fast-proliferating cancer cells that fail to respond to other treatment. To starve the cancer, insulin is used to increase cancer metabolism while simultaneously depriving the cancer of sufficient glucose (fuel). Cancer cells can have up to 10 times more IGF receptors than non-cancer cells, and so suffer greater effects from a variation in insulin than healthy cells, which survive fasting.
It is easy to measure blood sugar levels during this treatment to prevent brain damage, but very few controlled experiments have been undertaken to discover how to optimise such radical ideas, due, it seems, to the political and financial inertia of traditional drug-industry research, which seeks only new chemicals. If you want to beat cancer, you must first kill the demented hostility and apathy of the intellectual dictatorship of basically fascist capitalists who wear the cloaks of moralistic socialists and preach subjective radiation dogmas as a modern substitute for witchcraft superstition and taboos.

"Today we have a population of 2,383 [radium dial painter] cases for whom we have reliable body content measurements. . . . All 64 bone sarcoma [cancer] cases occurred in the 264 cases with more than 10 Gy [1,000 rads], while no sarcomas appeared in the 2,119 radium cases with less than 10 Gy."

- Dr Robert Rowland, Director of the Center for Human Radiobiology, Bone Sarcoma in Humans Induced by Radium: A Threshold Response?, Proceedings of the 27th Annual Meeting, European Society for Radiation Biology, Radioprotection colloquies, Vol. 32CI (1997), pp. 331-8.
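The proportions in Rowland's data can be checked directly. Because zero sarcomas appeared in the low-dose group, a standard exact binomial bound (a statistical addition, not part of Rowland's paper) gives the largest underlying rate consistent with that observation at 95% confidence; with 2,119 people it is about 0.14%. The names below are illustrative.

```python
def incidence(cases: int, population: int) -> float:
    """Observed proportion of a group showing the outcome."""
    return cases / population


def zero_event_upper_bound(population: int, confidence: float = 0.95) -> float:
    """Exact one-sided upper confidence limit on a rate when 0 of `population`
    subjects show the outcome: solves (1 - p) ** population = 1 - confidence."""
    return 1.0 - (1.0 - confidence) ** (1.0 / population)


HIGH_DOSE = (64, 264)   # bone sarcomas, cases above 10 Gy
LOW_DOSE = (0, 2119)    # bone sarcomas, cases below 10 Gy

if __name__ == "__main__":
    assert HIGH_DOSE[1] + LOW_DOSE[1] == 2383  # matches the total population quoted
    print(f"> 10 Gy: {incidence(*HIGH_DOSE):.1%} developed bone sarcoma")
    print(f"< 10 Gy: 0 cases; 95% upper bound {zero_event_upper_bound(LOW_DOSE[1]):.2%}")
```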

These extensive and accurate dose-effect data debunk the claims made by Linus Pauling in the 1950s that strontium-90 fallout from nuclear testing caused bone cancer. See the graph above relating radium to plutonium and strontium, which all have safe doses, with cancer thresholds that get larger at lower dose rates; for example, see the graph below for bone cancer threshold dose data from Hiroshima and Nagasaki (very high dose rates):

Linus Pauling versus radiation facts

"Small doses of any drug possess a bio-positive effect while the large dose of the same compound has the opposite bio-negative effect. In short, all drugs have opposite effects in two dose extremes. The young scientist that worked on this hypothesis for his PhD under the famous Stanford immunologist, George Fegan, to show that vitamin C could be dangerous in bigger doses while it is a stimulant and good for the health in very small doses never made it and had to leave science research altogether because Linus Pauling, the great hero of science, destroyed the young scientist completely. It was Pauling himself that had induced Fegan to work on the good effects of vitamin C on the immune system in the first place. The hormetic effect of vitamin C could not be swallowed by Pauling. Pauling could never agree with vitamin C being poisonous in larger doses! Later he fought an expensive legal battle against his own colleague, a former Director of the Pauling Institute, for showing that cancer growth is stimulated by vitamin C in larger doses, but Pauling lost the battle and was disgraced. Pauling got the second Nobel (Peace) Prize, after his first for the discovery of vitamin C [sic: Pauling's first Nobel Prize, in 1954, was for Chemistry, for his research on the chemical bond], by the same antipathy towards hormesis. Edward Teller was the father of American Nuclear deterrent against the communists. While Teller was testing atomic weapons, he showed the hormetic effect of radiation by accident. In very small doses radiation stimulates the immune system and increases human life span, radiation hormesis. It also slows the ageing processes by the hormetic effect of working as an anti-oxidant. … The famous Teller-Pauling debates that followed took the whole of America by surprise. Pauling succeeded in demonizing Teller to the extent that the Swedish Nobel Academy gave Pauling the Nobel Peace Prize, his second Nobel! 
There are many such frauds that have occurred in science … However the technology industry has become a big money spinner and that feeds society with the myth about science."

- Professor B. M. Hegde, MD, FRCP(Lond.), FRCP(Edin.), FRCP(Glas.), FRCP(Dub.), FACC(U.S.A.), FAMS, "Hormesis", http://www.bmhegde.com/hormesis.htm

THE EXAGGERATION OF URBAN NUCLEAR WEAPONS EFFECTS

The policy of exaggerating nuclear weapons effects on urban targets backfires when human-rights-violating dictatorships build nuclear weapons in order to use the exaggerated threats against democracies.

But hopefully the North Koreans are just interested in Project Orion, the nuclear-bomb-powered spacecraft developed by Freeman Dyson, as the only practical way to put a large colony on Mars safely and cheaply. It would travel directly (in a straight line!) and quickly to Mars using 2,000 nuclear bombs, carrying 150 people and attaining a top speed of 45 km/second. The travel time would be 3 months for the minimum Mars-Earth distance of 56 million km and 6 months for the largest close-approach distance of 101 million km. In 1959 the stability of the entire system was completely proved in a scaled-down demonstration test which impressed Dr von Braun. Declassified nuclear test tower steel ablation studies, referred to vaguely by Dyson in the BBC documentary above, are given in weapon test report WT-1134 and on page 59 of WT-1488, both quoted and discussed here. A photo of the blown-down tower legs from the 29 kt Apple-2 test is linked here, with discussion and additional photos here. (General information on Project Orion is linked here.)

The photo is obviously a publicity gimmick intended to bolster North Korean military prestige; otherwise they would have kept their attack targeting plans a closely guarded secret. On the other hand, the fact that North Korea is merely issuing threats of strikes does not make it certain that the situation will not develop further: remember Herman Kahn's analysis of the Munich Conference of September 1938. Kahn pointed out in his testimony to the June 1959 Congressional Hearings before the Joint Committee on Atomic Energy, The Biological and Environmental Effects of Nuclear War, on page 904:

"I have a book with me today which I recommend to those who want to exaggerate the impact of thermonuclear war. It is called 'Munich: Prologue to Tragedy,' by Wheeler-Bennett. ... As far as we can tell, Hitler was not bluffing [at the September 1938 Munich Conference where Chamberlain and Daladier gave in to Hitler's intimidation, handing over the Sudetenland, in exchange for a worthless peace promise]. The men who were in the room with him could see he was not bluffing. It was easy for the people back home to say he was bluffing, but not for the men who had the decision to make. The German people did not want war. The German Army did not want war. They literally threatened to have a military revolution [this premature argument with Hitler tragically discredited their opinion when Hitler succeeded, due to Chamberlain's softness]. But Hitler seems to have been willing to have a war if he couldn't have his way."

The posturing with published photos of missile trajectory strike plans is very much in the spirit of the Munich Conference or, for that matter, of the Pearl Harbor military threat evaluations made before 7 Dec 1941 (which Kahn discusses in his book "On Thermonuclear War"). In these cases obvious crises existed, but various expert authorities found seemingly good reasons to ignore or downplay the threat. Basically these reasons were soothing "no-go theorems" based on very shaky assumptions, of the type often used historically to stop radical ideas (like the heliocentric solar system, quantum theory or relativity) from upsetting the status quo in mainstream physics. Kahn explains that, according to the best experts, it was impossible to believe that Japan would attack Pearl Harbor in 1941, because (1) Japan couldn't hope to win a war with the USA, and (2) torpedoes need over 75 feet of water, and Pearl Harbor is largely only 30 feet deep. In addition, anyone pointing out a threat in public was deemed a scaremonger or warmonger. Popular consensus decided that if everyone just ignored the threat, it would cease to exist.

The combination of political and technological "no-go theorems" in this analysis made the idea of a surprise attack on Pearl Harbor taboo, and anybody who wanted to discuss the matter was dismissed as a time-waster. Japan saw the situation very differently, using different political and technological thinking. For example, Japan developed special torpedoes that needed only 30 feet of water, not the 75 feet believed necessary by American experts. Similarly, as Dr Irving Janis explains in "Victims of Groupthink", Kennedy's most brilliant experts failed to foresee that the USSR would put nuclear IRBMs in Cuba. The point is that, as Professor Feynman explained, averaging the best guesstimates of the experts does not put you in possession of hard facts; it is merely a consensus of fashionable speculation, a form of Cargo Cult pseudoscience. You cannot always rely on reason to predict what enemies will do.

If your enemies were totally reasonable, they simply wouldn't be enemies of free democracy in the first place. There has been speculation that North Korea can't place a nuclear warhead on top of a missile (despite having tested nuclear explosives successfully, and having put a satellite into orbit). This guess is not hard intelligence! As with Munich in September 1938 or Pearl Harbor in December 1941, the credibility of coercion is down to the dictator's fanaticism and desperation, precisely the things that would be laughed at in a democracy. Hitler in 1938 had a financial crisis due to ending unemployment by conscripting a massive National Socialist army (as explained in the previous post on this blog).

Germany was spending far more each year on arms than Britain, which was therefore slipping back and losing ground rather than "buying time to rearm" through the policy of appeasement, as Chamberlain's apologists claimed. At the same time, Germany couldn't afford what it was spending. The arms race would have ended through dictatorial failure (just as Reagan's capitalist arms race challenge threatened to bankrupt the USSR in the 1980s), since Germany simply could not support the financial strain of the arms race indefinitely without having a war (or "peaceful" invasions). The only way to avert economic collapse was war, both in the hope of winning through superior technology and skill, and to deflect attention from internal matters by using the massive army, navy and air force.

Similarly, Japan in 1941 didn't use simple calculations to determine whether it was likely to win a war against America. Instead, it relied on its own determination and fierceness to overcome the odds, plus a calculation that its chances of success, such as they were, would shrink as time went on and the relative strengths of the two nations changed. The major implication is that what appears to be common sense and reasonable from the perspective of planners in a democracy may not apply inside a bankrupt and desperate dictatorship that has starved its people to make nuclear weapons and generally prepare for war. This is similar to the situation in 1951, when the Korean War was raging and Russia was building nuclear weapons. Prime Minister Attlee, and later in 1952 Winston Churchill, were informed by military intelligence that the impoverished USSR dictatorship was making small numbers of nuclear weapons and might try a surprise attack using a smuggled nuclear weapon. The weapon might be hidden in cargo containers in an innocent neutral ship and timed to explode when the ship entered a British city port, or else smuggled in small radiation-shielded parts using drug-smuggling techniques and assembled by secret agents. This was a major Cold War civil defence threat from 1951 until the end of the USSR, as described by Frederick Forsyth in his August 1984 novel The Fourth Protocol, later made into a 1987 film. A major nuclear attack was actually always less likely than this type of subversive attack, using a false flag or no flag at all, because of the deterrence provided by a protected second-strike retaliation capability:

Above: nuclear weapons testing was used in part as a tour de force, or show of strength, during the Cold War (such as this spectacular U.S. Air Force colour photo of the 25 July 1946 Crossroads-Baker 23 kt test at 90 feet underwater in Bikini Lagoon, with the water column and Wilson condensation cloud dwarfing the target array of discarded WWII battleships).

The fallout data measured by George R. Stanbury and others at Operation Hurricane, the British nuclear test that simulated a terrorist ship attack, are linked here. Stanbury used them in 1954 to assess radiation hazards in the restricted U.K. Home Office civil defence report "Assumed Effects of Two Atomic Bomb Explosions in Shallow Water Off the Port of Liverpool," CD/SA51 (U.K. National Archives report HO 225/51). Stanbury used a contamination arrival time of 5 minutes, assumed that the population outdoors would take 2 minutes to move indoors, and used an average protection factor of 100 for buildings, allowing for radioactive rain to run off roofs into underground drains or soak into the soil. He concluded that two 20 kt bombs detonated in cargo freighters 180 m offshore at lunchtime, when 20% of the population is outdoors on lunch breaks, would kill 78,600 people by blast and radiation if the wind were blowing inland at 10 miles/hour.
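Stanbury's three assumptions (5-minute arrival, 2 minutes to get indoors, protection factor 100) can be combined in a simple dose model. This sketch is not Stanbury's method: it assumes the common t^-1.2 fallout decay approximation, which the summary of his report does not state, integrates to a hypothetical 48 hours, and uses a relative 1-hour dose rate of 1, so only ratios are meaningful. The function names are illustrative.

```python
DECAY_EXPONENT = 1.2  # assumed t**-1.2 fallout decay law


def integrated_dose(r1: float, t_start_h: float, t_end_h: float) -> float:
    """Dose between two times (hours after burst) for R(t) = r1 * t**-1.2,
    where r1 is the dose rate at 1 hour (any consistent units)."""
    k = DECAY_EXPONENT - 1.0  # 0.2
    return (r1 / k) * (t_start_h ** -k - t_end_h ** -k)


def sheltered_dose(r1: float, arrival_min: float = 5.0, delay_min: float = 2.0,
                   protection_factor: float = 100.0, end_h: float = 48.0) -> float:
    """Outdoors from fallout arrival until shelter is reached, then indoors."""
    t_arrive = arrival_min / 60.0
    t_inside = (arrival_min + delay_min) / 60.0
    outdoors = integrated_dose(r1, t_arrive, t_inside)
    indoors = integrated_dose(r1, t_inside, end_h) / protection_factor
    return outdoors + indoors


if __name__ == "__main__":
    unsheltered = integrated_dose(1.0, 5.0 / 60.0, 48.0)
    sheltered = sheltered_dose(1.0)
    print(f"sheltered / unsheltered dose over 48 h: {sheltered / unsheltered:.2f}")
```

In this sketch the sheltered dose is roughly a tenth of the unsheltered dose, and most of it comes from the two minutes spent outdoors after the fallout arrives, which is why the time taken to move indoors matters so much for fast-arriving fallout.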

The initial radiation is reduced for the Hurricane bomb-in-ship burst (with the bomb centre located 2.7 m below the waterline), and only 1.4% of the 25 kt total yield (or 1.8% if a total yield of 20 kt is assumed) was radiated as thermal radiation because the water cone thrown up rapidly quenched the fireball. The main problem is the rapid arrival time of the fallout from such a low yield near surface burst, which leads to incredibly high radiation doses in a small "hotspot" area directly downwind. (For the 15 megaton Castle-Bravo test, the maximum measured fallout doses in the hotspot areas were actually far lower as explained in weapon test report WT-915, because of the decay which occurred during the longer arrival time due to the higher mushroom cloud. Measurements from automatic recorders showed that fallout from Castle-Bravo began to arrive under the mushroom at a mean time of 28 minutes after burst and the dose rate only peaked at 65 minutes after detonation, so people in a real city would have had a relatively long time to organise and evacuate from the hotspot area directly downwind.) The shorter arrival time from the lower clouds of lower yield detonations gives less time for evasive actions like evacuation and sheltering. However, the smaller downwind hotspot area of intense "stem" fallout from a low-yield kiloton-range detonation allows its evacuation, since people will be able to literally run cross-wind to get out of the area, without receiving a lethal dose, if people are fully informed about the details.
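The benefit of a later fallout arrival can be put in rough numbers with the same t^-1.2 approximation. This is an assumption made for illustration (WT-915 reports measurements, not this formula): the sketch compares how much a given fallout deposit's dose rate has decayed by the 28 minute mean arrival time quoted for Castle-Bravo, relative to Stanbury's 5 minute arrival.

```python
def decay_factor(t_early, t_late, exponent=1.2):
    """Fraction of the dose rate remaining between two times after burst,
    assuming R(t) is proportional to t**-exponent (the t^-1.2 rule).
    Times may be in any consistent unit."""
    return (t_late / t_early) ** -exponent


if __name__ == "__main__":
    f = decay_factor(5.0, 28.0)  # minutes after burst
    print(f"dose rate at 28 min is {f:.2f} of the rate at 5 min")
    g = decay_factor(5.0, 65.0)
    print(f"dose rate at 65 min is {g:.3f} of the rate at 5 min")
```

On this approximation the dose rate from a given deposit falls to about 0.13 of its 5 minute value by 28 minutes, nearly an eightfold reduction before the fallout even lands.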

THERMAL SHADOWING BY WESTERN CITY BUILDINGS (MODERN CITY SKYLINES):

EXAGGERATIONS OF THE FIRESTORM AND FLASH BURNS EFFECTS USING INAPPROPRIATE WOOD-FRAME HOUSE IGNITION DATA FROM HIROSHIMA

The firestorm in Hiroshima (8:15 am, 6 August 1945 nuclear attack) was due to severe overcrowding of wooden buildings containing charcoal braziers used for breakfast, as proved by a survey of over 1,000 survivors from concrete buildings (who survived the firestorm), reported in the secret 1947 US Strategic Bombing Survey report on the firestorm, volume 2 of report 92, The effects of the atomic bomb on Hiroshima, Japan (which has nothing whatsoever to do with the 1946 US Strategic Bombing Survey report, which omits every single piece of data and just gives emotional propaganda to bolster deterrence):

Firestorm and nuclear winter (firestorm dust loading of stratosphere) liars debunked by hard Hiroshima evidence!

The effects of a surface burst nuclear explosion in any modern hi-rise concrete and steel frame city are grossly reduced compared to the numbers frequently cited using Glasstone and Dolan's 1977 "Effects of Nuclear Weapons". This should be publicised, to discourage potential aggressors from even thinking about trying such an attack. In addition, this new urban effects research should be extended to determine precisely the extent to which the effects of higher yield surface bursts and air bursts would be attenuated in modern cities. If this is done, the attractiveness of city targeting to potential aggressors would be reduced, and cheap duck and cover and fallout sheltering civil defense, with power system hardening against both EMP attacks and solar storms, would then appear a more credible and cost-effective option, to be taken more seriously than today. Nuclear targeting credibility would then be constrained to military targets, reducing the hysterical fears and political instability that result from nuclear proliferation. The original aim of nuclear deterrence was to deter military aggression, not to hold civilian targets hostage (counterforce, not countervalue).

Discrediting over-hyped exaggerations of urban nuclear effects and propaganda using unobstructed desert tests on isolated houses is a vital step towards peace and against encouraging nuclear proliferation in every tin-pot dictatorship of the world.

The deceivers who exaggerate undermine the credibility of simple civil defense countermeasures, and simultaneously give reassurance to dictators that making a bomb will make their dictatorship secure. We have gone into this in detail in previous posts, using the example of gas warfare exaggerations leading up to the September 1938 Munich Conference and earlier British policy concerning appeasement, pacifism and disarmament. If you lie to yourself and the public, either deliberately or due to careless calculations and secrecy of civil defense research on weapons effects in urban environments, then all your policies are going to be derived from inaccurate scientific data!

It was George R. Stanbury (brief extract above) who first disproved the possibility of urban firestorms, and thus of firestorm-injected stratospheric soot "nuclear winter", using a simple calculation of the thermal radiation flash "shadowing" by modern city buildings: in a nutshell, only the uppermost floors of a few percent of the buildings in London or any other modern city can "see" the surface burst fireball if the yield is below a megaton, and WWII firebombing experience proved that you need to set alight 50% of the buildings to cause a firestorm, so it is impossible for a nuclear burst amid skyscrapers to cause a firestorm! Stanbury also refers to two 1950s studies of firestorm impossibility in British cities (Birmingham and Liverpool), where the fire departments used detailed maps and made scale models of the cities, and found that even for an air burst like Hiroshima, the average height of buildings in modern Western cities prevents enough ignition to cause a firestorm! (Reference: U.K. National Archives document HO 225/121, George R. Stanbury, "Ignition and fire spread in urban areas following a nuclear attack", September 1964; relevant extracts are included in my compilation of British civil defense nuclear testing reports linked here.) QED.
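The geometric idea behind building shadowing can be sketched as a two-dimensional line-of-sight test. This is a hypothetical simplification, not Stanbury's method or the Marrs-Moss-Whitlock code: the fireball is treated as a single point at an assumed height, the ground is flat, and each intervening building is reduced to a (distance, roof height) pair on the same radial line. The street layout and heights in the example are invented for illustration.

```python
from typing import Sequence, Tuple


def can_see_fireball(
    target_distance_m: float,
    target_height_m: float,
    obstacles: Sequence[Tuple[float, float]],
    fireball_height_m: float,
) -> bool:
    """Return True if the straight line from a point fireball at
    fireball_height_m above ground zero to a window at target_height_m,
    target_distance_m away, clears every obstacle.

    obstacles: hypothetical (distance_from_ground_zero_m, roof_height_m)
    pairs for buildings lying on the same radial line."""
    for distance, roof in obstacles:
        if 0.0 < distance < target_distance_m:
            # Height of the sight line where it passes over this building.
            frac = distance / target_distance_m
            sightline = fireball_height_m + (target_height_m - fireball_height_m) * frac
            if roof >= sightline:
                return False  # the building blocks the direct thermal flash
    return True


if __name__ == "__main__":
    # Hypothetical street: 30 m tall blocks every 100 m out to 1 km,
    # with the point fireball assumed to sit 20 m above ground zero.
    blocks = [(float(d), 30.0) for d in range(100, 1000, 100)]
    for window_height in (5.0, 40.0, 150.0, 300.0):
        visible = can_see_fireball(1000.0, window_height, blocks, 20.0)
        print(f"window at {window_height:>5.0f} m: {'exposed' if visible else 'shadowed'}")
```

Only windows high enough for the sight line to clear every intervening roof are exposed, which is the sense in which only the uppermost floors of a small fraction of buildings can "see" a surface burst.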

Hence, no firestorms in modern cities, confirming page 350, paragraph 7.76 in the 1964 edition of Glasstone's "Effects of Nuclear Weapons":

"Based on these criteria, only certain sections - usually the older and slum areas - of a very few cities in the United States would be susceptible to fire storm development." (Extract linked here.)

Now what about fireball rise? As the "Trinity" near surface burst nuclear test proved in 1945, as well as the "Sugar" surface burst in 1951 and Britain's surface burst Buffalo-2 in 1956, there is no fireball rise involved here, because the fireball ceases to radiate any significant thermal radiation long before buoyancy sets in. Buoyancy isn't caused by a law of Archimedes (which only applies to bodies in a fluid where there is fluid pressure pushing upwards from below). A nuclear surface burst fireball doesn't rise until the partial vacuum at ground zero has been filled by the afterwinds, which causes an appreciable "hover time", during which there is very little upward motion.

Fireballs and hot air balloons rise because the pressure pushing upwards on their base is greater than the pressure pushing downward on their top. The fireball "sticks" to the ground initially because there is no significant upward pressure on its base. So only when the afterwinds return air to the vicinity of ground zero, does buoyancy and fireball rise commence. The photo below demonstrates that even at 9 seconds after the 1945 Trinity near surface burst test (22 kt on 30 m tower), very little fireball rise had occurred (contrasted to higher air bursts, which were already fast-rising toroidal vortices by this time):

The thermal radiation was long since over; the glow of the fireball is only just visible, since it had ceased to radiate appreciably within 3 seconds of burst (the pre-dawn test was in total darkness, and was self-illuminating). The slow fireball rise in surface bursts is such that it can generally be ignored when evaluating the shadowing effects of buildings on thermal burns and ignitions. However, a computerized study of city shadowing of fires and burns including fireball rise has been done: UCRL-TR-231593, Thermal Radiation from Nuclear Detonations in Urban Environments (R. E. Marrs, W. C. Moss, B. Whitlock), June 7, 2007, which finds on page 11 that Glasstone's "Effects of Nuclear Weapons" grossly exaggerates the thermal fires and burns from nuclear explosions in cities (all "evidence" from nuclear tests in the Nevada desert is fakery, since there were no skyscrapers in the Nevada desert around the fireball, or in Hiroshima and Nagasaki, where 1-2 story wood-frame buildings predominated):

"Even without shadowing, the location of most of the urban population within buildings causes a substantial reduction in casualties compared to the unshielded estimates. Other investigators have estimated that the reduction in burn injuries may be greater than 90% due to shadowing and the indoor location of most of the population. We have shown that common estimates of weapon effects that calculate a “radius” for thermal radiation are clearly misleading for surface bursts in urban environments. In many cases only a few unshadowed vertical surfaces, a small fraction of the area within a thermal damage radius, receive the expected heat flux." (Emphasis added.)

Since most of the Compton-scattered gamma rays (Compton scattering predominates) are scattered in the forward direction, the fission product component of the initial gamma ray dose is also substantially shielded by tall city buildings in the radial line between the fireball and observer. The secondary gamma rays from neutron capture by nitrogen and also from inelastic scattering of neutrons are emitted in random directions (isotropically from the point of emission), but since most neutrons are captured and scattered quite close to ground zero (within a few mean free paths), most of those "air secondary" gamma rays still originate from the vicinity of the fireball. Neutrons are scattered over a wider range of angles than most gamma rays, so there is more "skyshine" and buildings have a smaller shielding effect than for most of the initial gamma radiation, but there is still some shielding of neutrons by buildings in densely built-up hi-rise city areas. The dynamic pressure (wind) of the blast wave is also attenuated in a city, discrediting the application of the Rankine-Hugoniot equations (similarly, in an open trench, overpressure can diffract in, but the wind pressure just blows over the top without entering the trench). Every joule of energy imparted from the blast wave to a building to cause destruction by accelerating debris must be subtracted from the energy of the blast wave, or else energy is not conserved! Dr William G. Penney, 1950s Director of AWRE, explains this clearly in his 1970 paper on the yields of Hiroshima and Nagasaki, where he finds clear evidence, from laboratory-quality blast sensors like bent steel flag poles on buildings and the volume of semi-crushed petrol cans, that in both cities the act of causing destruction absorbed appreciable energy from the blast wave. This debunks all of the "data" from Nevada tests on houses in unobstructed desert terrain, which does not model city attenuation!
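The forward peaking of Compton scattering for MeV gamma rays can be checked with the Klein-Nishina formula for scattering off free electrons. This is a physics illustration only: it ignores electron binding and multiple scattering, and says nothing quantitative about real building attenuation. The function names and the choice of Simpson's rule are illustrative choices, not taken from any weapons effects code.

```python
import math

ELECTRON_REST_MEV = 0.51099895  # electron rest energy, MeV


def klein_nishina(theta, energy_mev):
    """Klein-Nishina differential cross-section per unit solid angle,
    in units of r_e**2 (classical electron radius squared)."""
    k = energy_mev / ELECTRON_REST_MEV  # photon energy in electron rest-mass units
    ratio = 1.0 / (1.0 + k * (1.0 - math.cos(theta)))  # scattered/incident energy
    return 0.5 * ratio ** 2 * (ratio + 1.0 / ratio - math.sin(theta) ** 2)


def forward_fraction(energy_mev, steps=2000):
    """Fraction of Compton scatterings with scattering angle below 90 degrees.
    Integrates dsigma/dOmega * sin(theta) with Simpson's rule; the 2*pi
    azimuthal factor cancels in the ratio."""
    def integrate(a, b):
        h = (b - a) / steps  # steps must be even for Simpson's rule
        total = 0.0
        for i in range(steps + 1):
            theta = a + i * h
            weight = 1 if i in (0, steps) else (4 if i % 2 else 2)
            total += weight * klein_nishina(theta, energy_mev) * math.sin(theta)
        return total * h / 3.0

    forward = integrate(0.0, math.pi / 2)
    backward = integrate(math.pi / 2, math.pi)
    return forward / (forward + backward)


if __name__ == "__main__":
    for energy in (0.001, 0.1, 1.0, 5.0):
        print(f"{energy:6.3f} MeV: forward fraction {forward_fraction(energy):.2f}")
```

At very low energies scattering is symmetric (the Thomson limit, about half forward), while at around 1 MeV a clear majority of scatterings go into the forward hemisphere, and the forward bias grows with energy.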

As Glasstone's 1964 "Effects of Nuclear Weapons" graphically shows, in the ~1760 ft altitude, ~20 kt air bursts on Hiroshima and Nagasaki, the fused Mach stem only began to form at a peak overpressure of ~16 psi and reached a height of ~185 feet at a distance of around 0.87 mile from ground zero, at 3 seconds after burst. Thus, most of the close-in damage to modern buildings - mostly near ground zero in Japan - resulted from regular (not horizontally travelling Mach) reflection, where the incident blast wave was coming downwards on a slant radial line from the fireball, avoiding any blast shielding by intervening buildings. This is not the case in a surface burst, where the blast comes horizontally and does suffer close-in attenuation effects by damage caused to intervening buildings. Surface burst attenuation by modern city buildings is therefore much greater than for the air bursts over Hiroshima and Nagasaki, where regular reflection caused much damage to the relatively few modern buildings, and where the predominant building types were 1-2 story wooden homes.
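The slant geometry of regular reflection near ground zero can be illustrated by computing the angle at which the incident shock strikes the ground for the ~1,760 ft burst height quoted above. This is a purely geometric sketch: it treats the burst as a point over flat ground and does not predict where the Mach stem forms, which also depends on the peak overpressure.

```python
import math


def incidence_angle_deg(ground_range_ft, burst_height_ft):
    """Angle between the incident blast ray and the vertical at a ground
    point, for a point burst at burst_height_ft over flat ground."""
    return math.degrees(math.atan2(ground_range_ft, burst_height_ft))


def slant_range_ft(ground_range_ft, burst_height_ft):
    """Straight-line distance from the burst point to the ground point."""
    return math.hypot(ground_range_ft, burst_height_ft)


if __name__ == "__main__":
    height = 1760.0  # approximate burst height over Hiroshima and Nagasaki, ft
    for miles in (0.1, 0.25, 0.5, 0.87):
        r = miles * 5280.0
        print(f"{miles:4.2f} mi: incidence {incidence_angle_deg(r, height):5.1f} deg, "
              f"slant range {slant_range_ft(r, height):6.0f} ft")
```

Close to ground zero the shock arrives nearly vertically, over the tops of intervening buildings; by 0.87 mile it arrives at roughly 69 degrees from the vertical, where the Mach stem had begun to carry it horizontally.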

EMP from Surface Bursts in Urban Environments

What's brand new and very surprising in the urban nuclear weapons effects business is a careful computer study last year (2012) by William Scott Smith and others at Los Alamos of the effects of building attenuation in a modern city on the electromagnetic pulse from an urban nuclear surface burst. A Nagasaki-type nuclear weapon was assumed to explode in a van parked in an open parking lot in Houston, Texas, with skyscrapers to its East and more-or-less open ground (low buildings) to its West. The effects of (1) building attenuation on the prompt gamma ray radiation which causes the Compton current to drive EMP and (2) building attenuation on the EMP itself (radio frequencies up to UHF) were computed. The "EMP was channeled outward along street canyons" (Los Alamos reports LA-UR-12-24078 and LA-UR-12-20227):

The EMP propagating Westwards over fairly unobstructed terrain was similar to that seen from Nevada and British surface bursts, showing a large EMP (peaking at 16,000 V/m vertical component at 130 metres West), but at similar distances East the EMP is trivial by comparison! This shows that for low yield surface bursts in cities, modern concrete and steel framed buildings will ensure that built-up areas "protect themselves" to an appreciable extent by mutual shielding: firstly by absorbing the prompt gamma rays and thus interfering with the production of the EMP in the first place, and secondly by reflecting (or absorbing) most of the actual radio frequency EMP energy when it is produced. So the free-field EMP data given for surface bursts in EM-1 "Capabilities of Nuclear Weapons" is a gross exaggeration when applied to modern urban targets!

Britain has declassified one early report on surface burst EMP:

J. B. Taylor, A Theory of Radioflash, U.K. Atomic Weapons Research Establishment, report AWRE-O33/59, October 1959, "Confidential" (UK National Archives document ES 4/361; related reports ES 12/458 and ES 10/1343 are still restricted) which states on pages 3 and 18:

"The first attempt at a theory of [surface burst] radioflash was by [T. S.] Popham, in 1954, who suggested that radio signals were due to currents carried by Compton electrons arising from gamma rays produced in the nuclear explosion… Both the period and amplitude of the radio signal would be expected to increase very slightly with yield."

Fig. 1b in Taylor's 1959 report gives the EMP electric field waveform from a surface burst, measured at a distance of 300 km:

-28.1 V/m peak at a time of 5 microseconds (the minus sign denotes the negative direction, i.e. a vertical upwards Compton current and an opposite "conventional current", owing to Benjamin Franklin's convention that current is defined as the flow of positive, not negative, charge). The field crosses zero at 17.2 microseconds, reaches a positive peak of 15.4 V/m at 23 microseconds, and crosses zero again at 42.5 microseconds. The second negative peak is about -3.75 V/m at 54 microseconds.

This EMP data from nuclear tests on unobstructed deserts or the Pacific ocean is totally misleading for surface bursts in urban environments, where the city buildings interfere with both the prompt gamma ray EMP mechanism itself and then attenuate (like attenuation of line-of-sight UHF signals) the (minimal) EMP signal produced! Thus, ground-level EMP sensors will not be likely to give a useful waveform to determine the EMP characteristics of a nuclear detonation in an urban environment, and satellites will be little use because surface bursts radiate most of their energy horizontally, and the small amount radiated in (slant) upward directions will be at frequencies that are severely if not completely attenuated/reflected by the earth's ionosphere before getting anywhere near a detection satellite. Collecting fallout samples will not help determine the yield much in a terrorist attack, either, because radiochemical analysis only gives the ratio of fission products to unfissioned fissile material (including material which has captured neutrons in non-fission reactions).

In nuclear tests, radiochemical analysis determined fission yield because the people doing the analysis knew exactly how much fissile material was in the bomb in the first place. So multiplying the ratio of fissioned material to fissioned plus unfissioned material in a fallout sample by the mass of the fissile material originally present in the bomb gave the total fission yield. Without knowing the amount of material originally present in the bomb, fallout samples only tell you the efficiency of the bomb, not the total yield. You would have to resort to accurate total yield determination methods used by Penney and others in Japan, such as damage caused to simple structures like blast-bent flagpoles, plus an area integral of the measured 1 hour reference time dose rate fallout pattern, which would give a good idea of the fission yield.
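The point about radiochemistry can be made concrete with simple arithmetic. A minimal sketch, assuming the textbook figure of roughly 17.6 kt per kilogram of fully fissioned material (Glasstone's "about 8,000 tons of TNT per pound"); the efficiency and fissile masses below are hypothetical. The same measured efficiency is compatible with very different yields unless the original mass is known.

```python
KT_PER_KG_FISSIONED = 17.6  # approximate: ~8,000 tons TNT per pound fissioned


def fission_yield_kt(efficiency, fissile_mass_kg):
    """Fission yield from the fraction of fissile material fissioned (which
    radiochemistry of a fallout sample can give) and the initial fissile mass
    (which only the bomb's builder knows)."""
    if not 0.0 <= efficiency <= 1.0:
        raise ValueError("efficiency must be a fraction between 0 and 1")
    return efficiency * fissile_mass_kg * KT_PER_KG_FISSIONED


if __name__ == "__main__":
    measured_efficiency = 0.2  # hypothetical ratio from a fallout sample
    for mass in (4.0, 6.0, 10.0):  # hypothetical fissile masses, kg
        print(f"{mass:4.1f} kg -> {fission_yield_kt(measured_efficiency, mass):5.1f} kt")
```

The efficiency is identical in every case, yet the implied yield varies in proportion to the unknown mass, so a fallout sample alone cannot pin down the yield of a terrorist device.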

Secrecy of nuclear weapons capabilities: new information about updates to EM-1, Capabilities of Nuclear Weapons

On the cover of last month's (March 2013, vol 3, issue 1) Defense Threat Reduction Information and Analysis Centre journal, The Dispatch, DTRIAC Program Manager, Lt Col Craig Hess announces: "This issue of DTRIAC Despatch focusses on Effects Manual One, or EM-1, and I hope it is of interest and of value to you." It certainly is!

The first published, unclassified admission I have found of the existence of the then-secret forerunner to EM-1, Capabilities of Atomic Weapons, TM 23-200, was made by Dr Frank H. Shelton (then Technical Director of AFSWP, the Armed Forces Special Weapons Project charged with compiling and editing Capabilities of Atomic Weapons; Shelton discusses the precursor discovery in the nuclear testing TV/DVD documentary film, Trinity and Beyond) in his testimony to the May-June Hearings before the Special Subcommittee on Radiation of the Joint Committee on Atomic Energy, The Nature of Radioactive Fallout and Its Effects on Man, page 90:

This immediately led to a request for it by George R. Stanbury and other British civil defense nuclear weapons testing researchers at the U.K. Home Office Scientific Advisory Branch and the Aldermaston Atomic Weapons Research Establishment. As a result, in November 1957 a new edition was prepared which was downgraded from Secret - Restricted Data to Confidential, and this was given to Britain in exchange for British testing information. (Britain had been exchanging nuclear weapons testing data with America since 1954, the American FWE or "Foreign Weapons Effects" reports.)

Stanbury uses the data in TM 23-200 in his classified British civil defense reports from 1958 onwards. The problem here is that the whole basis for British civil defense casualty reduction planning was submerged ever deeper into secrecy, so the unclassified publications made assertions which were not backed up by any available published references, and were attacked by both political anti-civil defence media and pro-USSR Marxist critics. This continued after the Home Office got the 1974 NATO edition of EM-1, Capabilities of Nuclear Weapons, which in Table 10-1, "Estimated Casualty Production in Buildings For Three Degrees of Structural Damage", uses the WWII British data for casualties versus the amount of brick house destruction. This data was falsely "debunked" in attacks on British handbooks by Marxist Union groupthink Dogma Scientists in the 1980s, who claimed that because the blast duration at a given overpressure increases with the cube-root of weapon yield, WWII data from small ~0.1 ton TNT bombs on London is inappropriate. Actually, the WWII correlation was never based on casualties versus overpressure for WWII bombings (nobody measured the overpressures in WWII!), but on casualties versus degree of damage. This automatically takes account of the blast duration effects. E.g. in WWII, collapse of a 1-2 story brick house without a Morrison shelter (where people merely ducked under the staircase or a table) resulted in 25% fatalities; this is tied to the degree of damage, not to a fixed overpressure.
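The cube-root scaling the critics relied on is easy to check. Under standard cube-root blast scaling, the distance and positive phase duration at a given peak overpressure grow as the cube root of the yield. The sketch below compares a ~0.1 ton TNT bomb with a 20 kt weapon; it treats the two yields as equivalent TNT and ignores the fraction of nuclear energy not going into blast, both simplifying assumptions.

```python
def cube_root_scale(yield_ratio):
    """Factor by which distance and positive-phase duration at a given
    peak overpressure grow when the yield is multiplied by yield_ratio."""
    return yield_ratio ** (1.0 / 3.0)


if __name__ == "__main__":
    small_tons = 0.1     # WWII high-explosive bomb, tons TNT (figure from the text)
    nuclear_tons = 20e3  # 20 kt, tons TNT equivalent
    factor = cube_root_scale(nuclear_tons / small_tons)
    print(f"durations and ranges scale up by about {factor:.0f} times")
```

A factor of roughly 58 in duration is real, but it changes the overpressure at which a house collapses, not the casualty rate among occupants of a house that does collapse, which is what the WWII surveys recorded.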

The corresponding overpressure for house collapse falls with increasing weapon yield, so there is no omission of the blast duration effect: the "critics" simply didn't know the secret facts, pseudoscientifically guessed how the data was analyzed in the first place, and then attacked their own deluded guess as wrong! It is also important to note that blast duration has no effect below threshold overpressures for damage: if you exert a pressure (force per unit area) which is not enough to deform a wall, then regardless of how long you continue to apply that force, the wall doesn't fall down. Dynamic pressure impulse alone does not determine whether a tree falls down. E.g., since dynamic pressure scales as the square of the wind speed, a 100 mile/hour wind for 1 second delivers the same dynamic pressure impulse as 10 miles/hour for 100 seconds or 1 mile/hour for 10,000 seconds, but the effects are not the same: no trees fall down if the wind pressure isn't enough to bend them over, no matter how long it lasts! Blast duration is only important for situations where the peak overpressure or peak dynamic pressure is above the threshold needed to cause damage. Increasing the blast duration increases the amount of damage done at high pressures, but it does not reduce the threshold peak pressure which is required for the onset of damage:
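The wind example can be checked numerically. Dynamic pressure is q = ½ρv², so equal impulse at one tenth of the wind speed needs one hundred times the duration. The damage test below is a hypothetical rectangular simplification of a pressure-impulse criterion (damage requires both the peak pressure and the impulse to exceed thresholds); the 1.225 kg/m³ sea-level air density is standard, but the threshold values are invented for illustration.

```python
AIR_DENSITY = 1.225   # kg/m^3, standard sea-level air
MPH_TO_MS = 0.44704   # metres per second in one mile per hour


def dynamic_pressure_pa(speed_mph):
    """Dynamic (wind) pressure q = 0.5 * rho * v**2, in pascals."""
    v = speed_mph * MPH_TO_MS
    return 0.5 * AIR_DENSITY * v * v


def impulse_pa_s(speed_mph, duration_s):
    """Dynamic pressure impulse for a constant wind (pressure x time)."""
    return dynamic_pressure_pa(speed_mph) * duration_s


def damaged(speed_mph, duration_s, q_threshold_pa, impulse_threshold_pa_s):
    """Hypothetical rectangular pressure-impulse criterion: damage needs BOTH
    the peak dynamic pressure and the impulse to exceed their thresholds."""
    return (dynamic_pressure_pa(speed_mph) > q_threshold_pa
            and impulse_pa_s(speed_mph, duration_s) > impulse_threshold_pa_s)


if __name__ == "__main__":
    q_crit, i_crit = 500.0, 300.0  # hypothetical tree thresholds (Pa, Pa*s)
    for mph, secs in ((100, 1), (10, 100), (1, 10_000)):
        print(f"{mph:>3} mph for {secs:>6} s: impulse {impulse_pa_s(mph, secs):6.0f} Pa*s, "
              f"damaged={damaged(mph, secs, q_crit, i_crit)}")
```

All three winds deliver the same impulse, but only the one whose peak pressure exceeds the threshold does any damage.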

Just as important, thermal flash shielding by clothing (whose ignitions in Hiroshima were easily rolled out by people lying down to avoid the blast) and the low casualties due to the firestorm in Hiroshima are obfuscated in popular propaganda that exaggerates nuclear effects to serve political dogma that dislikes the effectiveness of duck and cover civil defence:

The analysis of the Hiroshima thermal flash ignition mechanism at the 1953 Encore nuclear test is still confined to the publicly unavailable nuclear weapons test reports WT-774 and WT-775, dealing with interior and exterior thermal flash ignition, respectively. The problem is that the Nevada desert is very dry compared to most modern cities, which are built around rivers or near the ocean. The secret U.S. Strategic Bombing Survey report 92 volume 2 on the Hiroshima firestorm discloses that a survey of survivors showed that the firestorm was due to blast-overturned charcoal braziers in the wooden homes filled with paper screens and bamboo furnishings, and states that the thermal flash only caused black coloured air raid blackout curtains to ignite very close to ground zero; there was no widespread ignition of wooden homes. The Nevada Encore test is being used by various propaganda historians to represent a modern city, when in fact most windows don't see the fireball due to intervening buildings, the infrared component which actually starts fires isn't appreciably scattered (unlike the visible light component, which is often significantly scattered by clouds and dust), and the higher humidity outside a desert means that the transient flaming of newspapers and curtains during the thermal flash has less effect in igniting other materials, which contain moisture due to the humidity in most cities and real homes.

In addition, city buildings often contain fire sprinklers and fire extinguishers, so the few uppermost rooms facing the fireball which suffer thermal ignitions can easily be tackled. Unlike WWII, where the air raid continued for a long time and included high explosives, delayed detonation anti-personnel fragmentation bombs, etc., to deter the immediate extinguishing of 1 kg magnesium incendiary bombs, a nuclear bomb's effects have a definite time sequence imposed by the laws of physics. This means that once the blast wave passes (extinguishing most solid fuel fires exposed to the blast winds above 2 psi peak overpressure, although deep-sided trays of burning liquids in tests were protected from the blast winds and continued to burn), incipient fires can be stamped out before they grow large enough to spread to other objects in a room. The great attacks on civil defense in the 1930s asserted that gas would be used in combination with incendiary bombs, preventing people from sheltering against gas attacks in rooms with closed and sealed doors and windows. This led to the pretty disastrous decision by Anderson to order outdoor shelters (which ended up being rejected by most people, as shown by the November 1940 shelter survey, due to cold and damp during repeated Blitz bombings), rather than Morrison type indoor shelters, which utilized the house as the first line of protection (absorbing energy by the act of being damaged by blast, like a car's "crumple zone") and simply withstood the weight of falling debris from the house: the force due to the weight of a house depends only on its mass and the acceleration due to gravity, both of which are totally independent of bomb size or pressure!

Above: everyone was encouraged to put out incendiaries, and to roll out burning clothing, during the WWII air raids on Britain. This applies even better to nuclear fires, where the fires are not hard-to-extinguish burning magnesium, phosphorus, or napalm, but merely everyday materials like paper, which can in most cases be easily stamped out if tackled before the fire spreads. Anti-civil defense propaganda not only fails to take account of the proper scaling laws in comparing WWII air raids to nuclear explosions, it also tries to claim that somehow the nuclear attack fires are worse. The real WWII incendiary problems occurred during protracted air raids where falling high explosives (some with delay fuses to protract the danger) and fragmentation butterfly bombs were deliberately included in bomb loads, specifically to try to prevent people from easily extinguishing incendiary bombs before they had time to set houses alight! This is a far worse situation than fire-fighting in a nuclear attack, where the time dependent effects sequence is simple! The worst fire destruction of WWII in London occurred in deserted book warehouses in the Docklands, before firewatching was made compulsory by law. Putting people in outdoor shelters increased the fire threat, because small incipient house fires were able to burn out of control.

Critics of civil defense deplored its cheapness and demanded a "Maginot Line" of expensive deep shelters as being the "only trustworthy safeguard against attack", which is totally crazy. As for the Maginot Line, or Hiroshima (which had plenty of shelters, with nobody in them), the enemy simply has to change the targetting and strike plan to either areas or allies which lack the expensive defenses, or else to use a surprise attack when nobody is in the expensive deep shelters! It's the spur of the moment knowledge about duck and cover that really counts in civil defense. Outdoor shelters in WWII prevented people from remaining indoors and immediately extinguishing fires, so the policy maximised the amount of house destruction. In addition, the incendiary threat had been exaggerated. When there is a real risk of attack, people at the end of the day value life, and in WWII they lost apathy about civil defense, and reduced the fire risk by removing clutter, ensuring adequate buckets of sand/water or other fire extinguishers for firefighting, and this response negated dire assumptions in pre-war fear-mongering "predictions" of weapons effects. All nuclear weapons effects predictions should clearly state what assumptions they use, and should compare the results with and without simple countermeasures!

Above: flying glass is a typical example of an effect of nuclear weapons that is easy to protect against using knowledge and evasive action, both relatively cheap countermeasures that don't have the drawbacks of Maginot Line shelter psychology. Firstly, as these graphs demonstrate, high overpressures mean very small glass window fragments, almost a powder, which mostly penetrate only superficially into the skin and are unlikely to penetrate through the abdominal wall to deeper tissue. Obviously, since the outer skin can stop these fragments, so will most types of clothing. To avoid the major problem of glass in the eyes, you can turn away and duck and cover in the relatively long time interval (an average of several seconds over most of the area where duck and cover is needed) between a visible light flash brighter than the sun and the arrival of the blast wave. This is a benefit of nuclear weapons over a similar amount of blast destruction by a large conventional air raid: the flash of a nuclear weapon gives advance warning of the blast, provided people are aware and informed of this fact (rather than the usual propaganda by TV media, which lies that the sound accompanies the flash!):
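The warning time between flash and blast can be estimated to first order. A minimal sketch, assuming the shock travels at the ambient speed of sound (343 m/s, air at about 20 °C): since the real shock front is always at least as fast as sound, and markedly faster close in where overpressures are high, this gives an upper bound on the delay rather than an exact figure.

```python
SPEED_OF_SOUND_MS = 343.0  # m/s in air at about 20 C (assumed ambient conditions)


def blast_delay_estimate_s(distance_m):
    """Rough upper-bound estimate of the time between the flash (which arrives
    essentially instantly) and the blast arrival, assuming the shock moves at
    the speed of sound. Close to the burst the shock is supersonic, so real
    delays there are shorter."""
    return distance_m / SPEED_OF_SOUND_MS


if __name__ == "__main__":
    for km in (1.0, 2.0, 5.0, 10.0):
        print(f"{km:4.1f} km: up to ~{blast_delay_estimate_s(km * 1000.0):4.1f} s to duck and cover")
```

At the several-kilometre distances where windows are broken but buildings stand, the estimate gives a window of several seconds or more, consistent with the interval described above.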

Glasstone's 1962/4 "Effects of Nuclear Weapons" contained a chapter, "Principles of Protection", emphasising the time factor, which does not apply to conventional weapons, where the blast arrives too fast for duck and cover over the area where windows are broken:

Above: the time factor for initial gamma radiation. Note that soldiers who stood up in Nevada trenches after the blast wave passed to get a better view of the rising fireball got more initial gamma radiation than those who remained lying down. This is partly because of the "hydrodynamic enhancement" which occurs once the compressed shock front passes the observer's location, leaving only low density air (the partial vacuum blast phase) between the fireball and the observer, which increases the gamma radiation transmission rate. Close to the burst, only a small fraction of the initial gamma radiation is received prior to the arrival of the blast wave, so you should remain lying down behind a dense wall or shielding for 20 seconds or so to minimise this dose. Also note that fallout effects like radioactive iodine-131 in milk are predictable and occur on a definite timescale, so contaminated milk can be avoided for a particular period after burst to avoid the threat, without the need to keep taking measurements. The laws of decay for nuclides don't need re-evaluation! There is a lot of propaganda about radiation, all of it based on pure ignorance.
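The predictability of iodine-131 decay can be shown directly from its half-life of about 8.02 days. This sketch assumes the contamination falls only by radioactive decay and ignores any other removal processes; the reduction factors in the example are arbitrary illustrations, not regulatory limits.

```python
import math

I131_HALF_LIFE_DAYS = 8.02


def remaining_fraction(days):
    """Fraction of I-131 activity left after `days` (simple exponential decay)."""
    return 0.5 ** (days / I131_HALF_LIFE_DAYS)


def days_to_reduce(factor):
    """Days for I-131 activity to fall by the given factor (e.g. 1000)."""
    return I131_HALF_LIFE_DAYS * math.log2(factor)


if __name__ == "__main__":
    for factor in (10, 100, 1000):
        print(f"reduction by {factor:>4}x takes about {days_to_reduce(factor):5.1f} days")
```

A thousandfold reduction takes about ten half-lives, roughly 80 days, a figure that can be written down in advance rather than measured after the event.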

Above: duck and cover not only reduces flying glass and thermal flash exposure, it also reduces the body area exposed to the blast winds, and so reduces or eliminates impacts from debris carried by the winds, and translation (being blown along) if standing. Fallout similarly takes time to arrive and is predictable with weather forecasts, so again "sitting duck assumptions" that people will remain outdoors with no protection, as in March 1954 on Rongelap Atoll, are totally misleading. People can thus take evasive action by sheltering in buildings or leaving the area when they see fallout arrive, which consists of fused grains of sand, unlike blast-lofted dust:

Above: Dr Terry Triffet's testimony to Congress in 1959 makes it clear that people can identify fallout particles, distinguishing them from blast-blown dust in a nuclear attack. It's a matter of knowledge of the facts replacing ignorance-derived fears. Also note that the very low energy gamma ray emitters Np-239 and U-237 in the fallout from bombs with U-238 tampers or fusion capsule pushers make the mean gamma ray energy decline especially fast in the fractionated close-in fallout (8 miles downwind) from a land surface burst (just 0.25 MeV at 1 week, compared to 0.7 MeV in Glasstone and Dolan's book, and the 1.25 MeV Co-60 gamma rays which are used to determine protection factors). This means protection is boosted, since lower energy gamma rays are shielded more easily.

EM-1 tree blowdown data for road blockage around a 1 kt surface burst nuclear detonation in two types of forest, based on various tests. Note that radial movement (directly towards or away from the explosion) is far easier than movement around the circumference of damage, because trees blown down fall mostly away from the point of the explosion. Therefore, you can more easily walk or drive between tree stems when evacuating or entering a damaged area for rescue purposes, without having to drive over trees. (These graphs are included as an appendix to the unclassified report by Phillip J. Morris, DNA 3054F, AD763750, "Forest Blowdown from Nuclear Airblast.")

-

Update 8 April 2013:

‘The fundamental risk to peace is not the existence of weapons of particular types. ... Aggressors ... start wars because they believe they can gain more by going to war than by remaining at peace.’

- The Iron Lady’s address to the United Nations General Assembly on Disarmament (after pointing out that since Nagasaki, 10 million people had been killed by 140 non-nuclear conflicts), 23 June 1982.

On 29 October 1982, she predicted the fall of the Berlin Wall and the USSR:

‘You may chain a man, but you cannot chain his mind. You may enslave him, but you will not conquer his spirit. In every decade since the war the Soviet leaders have been reminded that their pitiless ideology only survives because it is maintained by force. But the day comes when the anger and frustration of the people is so great that force cannot contain it. Then the edifice cracks: the mortar crumbles ... one day, liberty will dawn on the other side of the wall.’

On 22 November 1990, after a long struggle alongside Ronald Reagan against USSR aggression, she declared:

‘Today, we have a Europe ... where the threat to our security from the overwhelming conventional forces of the Warsaw Pact has been removed; where the Berlin Wall has been torn down and the Cold War is at an end. These immense changes did not come about by chance. They have been achieved by strength and resolution in defence, and by a refusal ever to be intimidated.’ (Quotations: The Downing Street Years.)

Update (17 May 2013): the political problem of exploiting tragedy to "close down the argument"

The "cognitive dissonance" that the facts on nuclear weapons bring home is simply Orwell's "doublethink". When political ideologues lose an argument, they resort to emotional smears and seek to "close down the argument", a tactic guaranteed to "work" if they can ban their opponents' last word from being published or broadcast. This is proved for all time by the ranting and screaming being used to hide rational facts in the current political debate on Britain's USSR/commie-type socialist economic debt bomb, costing over £220 billion a year, when the government is still borrowing more money each year on top of its record £1 trillion total debt. (The debt is still rising; they are merely making a small reduction in the rate at which it grows, i.e. the annual deficit between revenue/income and expenditure.)

This emotional screaming to hide facts is something that civil defense and nuclear weapons deterrence debaters need to understand, because ever since Hiroshima and the Bravo fallout on the Marshallese islanders and Japanese fishermen, emotions have been exploited to "close down" all rational, fact-based discussions on the bomb. The response by James Newman in Scientific American to Herman Kahn's 1960 book On Thermonuclear War is proof of that.

Leo McKinstry has written a nice discussion of the desperate, hypocritical political tactic of exploiting emotions to "close down" debates; some extracts are given below:

All political parties in Britain are making a mockery of the whole concept of true democracy, which was originally in ancient Greece a daily referendum "choice" on issues, not an EUSSR-style "choice" between a few rival dictators who then proceed to ignore critics and run things for 4-5 years. The way that emotional ranting and screaming between a few greasy-pole-climbing geniuses replaces rational debate puts me off all political parties.

The mix-up between Marxist-socialist ideals and capitalist economic necessities creates the major financial problem. If you're going to have socialist welfare, you have to do it in a slightly Marxist fashion, using hostels for low-cost (to the taxpayer) accommodation and maybe collective farms and so on, as the USSR had: you cannot afford to mix up the two systems and use socialist safety-net ideology to fund Capitalist-style lifestyles. The current system not only demotivates many, it also runs up the national debt. The hypocrisy of the Socialists is precisely the fact that they are really all Capitalists at heart, not Socialists. They want to distribute not equality, but money. That's why they're Capitalists of the worst sort, Capitalists disguised in the cloak of Marxism.

McKinstry states: "The Left knows that it has lost the argument ... so in place of rational discussion it resorts to emotional blackmail and bullying. ... politicians and their pressure group allies now indulge in desperate smear tactics by trumpeting individual cases ... Balls claimed that the Chancellor's 'calculated decision' to use this individual case was 'nasty and divisive and demeans his office.' Yet oblivious to the grotesque double standards Balls is now eagerly ... exploiting personal tragedies ..."

This exploitation of personal tragedy is precisely what happens in nuclear radiation controversy, nuclear weapons effects discussions of Hiroshima, and fallout effects on the Marshallese and Japanese fishermen.

You don't find pacifists, with their bankrupt arguments against war, using individual cases from conventional war to "close down" the argument in their favour: Stalin murdered 40 million by starvation and Hitler 6 million in concentration camps, without technological "weapons". These millions upon millions died of starvation and of disease in freezing camps. It's not necessary to use weapons to kill millions: the worst "weapons" in human history are political policies, not weapons. Banning nerve gas or nuclear weapons can never stop this evil, which is not based on technology. It's not technology that is evil, it's unopposed pseudoscience like eugenics, Marxism, and other ideologies that provide a fig leaf for bigoted dictatorships. Pacifism that blames the weapons rather than the ideologies is pseudoscience, and it is morally wrong. You're either for evil or against it.

The emotional rants "banning" nerve gas and nuclear weapons haven't prevented the Syrian regime from apparently using nerve gas, as Aum Shinrikyo did in 1995, or the North Koreans from testing nuclear weapons. There is no such thing as an effective "ban" in the real world. The ban by itself is, at best, a cosy delusion. At worst, it fosters an atmosphere which encourages violation. If you "ban crime" and then feel free and safe to leave your door unlocked, you may be encouraging an increase in crime rather than stopping it. What counts is the enforcement. Drug bans and laws don't prevent drug smuggling, banning alcohol didn't work in the prohibition era, etc. Banning rearmament didn't do a thing to stop Hitler rearming in the 1930s. Laws and bans will be broken by law-breakers. Banning the Syrians from using nerve gas is akin to the "banning" of Hitler's regime from rearming illegally in the 1930s.

Law-breakers, of course, are precisely the people that laws and bans are ineffective against, while those who try to abide by regulations are for the most part not the real problem. This is why we need a police force, not merely "laws" and "bans". This is a fact ignored by ideologues (ideology fanatics) who wish to believe that removing offensive weapons from democracies (that agree) will make the world safe. This is like outlawing crime to abolish the need for a police force! Laws should be concerned more with reducing risks than with blind obedience to the "authority" of officialdom, which is a dictatorial coercion tactic used by terrorist regimes, not real democracies. People in free democracies must be given the hard factual evidence that justifies and supports laws, i.e. reasoning rather than just dictatorship from a secret elite.

CIVIL DEFENCE AND WINSTON CHURCHILL'S 4 JUNE 1945 WARNING OF SOCIALIST DICTATORSHIPS IN PEACETIME BRITAIN

The key problem is not scientific data, but the political groupthink ideology which asserts in a moralistic, dogmatic sneering manner: "weapons are the cause of war, and civil defence stands in the way of utopian surrender to every dictatorship which acquires weapons whose effects have been exaggerated and turned into a mythical-type Golem; survival tactics against nuclear or any other weapons are immoral, because they decrease suffering in war and thus make war fighting a more realistic alternative to surrender than would otherwise be the case; scientific evidence on the exaggeration of nuclear weapons effects must be ignored (head in the sand policy) as if it is immoral war-mongering, since the only aspect of war to be feared is its deterrence with technological weapons; we must not allow facts and truth to distract us from our Ivory Tower moralistic/ethical preaching that peace and utopia will come in a guaranteed way through socialist equality and disarmament (despite all evidence to the contrary, despite the failure of USSR dictatorship...)".

The background to this weird dogmatic madness is as follows:

"But when economic power is centralized as an instrument of political power it creates a degree of dependence scarcely distinguishable from slavery."

- Friedrich von Hayek (1899–1992), The Road to Serfdom, "Planning and Power."

(THIS IS THE REASON FOR CHURCHILL’S WARINESS OF ATTLEE’S PLANS FOR EXTENSIVE NATIONALIZATION AND EXPANSION OF STATE CONTROL.)

(1) During WWII, civil defence, not Marxism, introduced state social security (basic socialism), including nationalised wartime industries and a forerunner of the national health service, which was organized to deal not only with widespread air raid casualties but also with over a million evacuees. (Clement Attlee, by contrast to civil defence, had been a pacifist as Labour Leader in 1935, and had refused to endorse even modest rearmament proposals and civil defence precautions at that crucial time, when Hitler's remilitarization could have been stopped without world war.) Churchill accepted the most important and economical findings of the Beveridge welfare report in 1942, and planned comprehensive education from 1944.

But Churchill had to support Joseph Stalin's USSR as a wartime ally after Hitler invaded the USSR (both the USSR and the Nazis, by a secret mutual pact, had invaded Poland from opposite sides in 1939). This allowed the socialist-supporting British media to produce endless pro-USSR propaganda, a backdrop which was more important to Labour's Clement Attlee securing victory in the 1945 general election than Churchill's heavy-handed attack on socialist dictatorship. Labour won in 1945 because Churchill was associated with war, which people were tired of (to put it mildly), and people wanted a change. In addition, socialism seemed nice because it helped deal with social problems during the war, and the socialist USSR was a wartime ally (after being invaded by the Nazis), causing loads of pro-USSR, pro-socialist hype.

(2) Churchill's 4 June 1945 radio broadcast warning of socialist brainwashing groupthink was based on Friedrich Hayek's 1944 book, The Road to Serfdom, which was the key influence behind Thatcher, and is a knife twisted into Marxist socialism. Hayek's book backs up Churchill's speech! No word from Attlee to deny this! The problem was that Churchill said of Attlee's socialist government: "They would have to fall back on some form of Gestapo, no doubt very humanely directed in the first instance." The word Gestapo was seized on out of the context of the rest of Churchill's speech, because in 1945 socialism seemed a far cry from the Gestapo.

It was before Stalin's guilt for the Katyn Forest massacre of Polish officers (whose mass graves had been found in 1943) was widely accepted, it was prior to Churchill's "Iron Curtain" warning about the whole of Eastern Europe falling simply from Nazi tyranny to USSR dictatorship, and it was prior to the 1948 Berlin airlift, when Stalin tried to use starvation to bring about submission. In other words, on 4 June 1945 Churchill was a prophet ahead of his time, and his warning was ridiculed by Mr Clement Attlee just as his warnings of Hitler in 1935 had been ridiculed by Mr Clement Attlee.

Churchill's warning (4 June 1945):

"My friends … Socialism is inseparably interwoven with Totalitarianism and the abject worship of the State. … Look how even today they hunger for controls of every kind, as if these were delectable foods instead of wartime inflictions and monstrosities. There is to be one State to which all are to be obedient in every act of their lives. The State is to be the arch-employer, the arch-planner, and arch-administrator and ruler and the arch-caucus-boss. … A Socialist State once thoroughly completed in all its details and its aspects—and that is what I am speaking of—could not afford to suffer opposition. … Socialism is, in its essence, an attack not only upon British enterprise, but upon the right of the ordinary man or woman to breathe freely without having a harsh, clumsy, tyrannical hand clapped across their mouths and nostrils. ... no Socialist system can be established without a political police. Many of those who are advocating Socialism or voting Socialist today will be horrified at this idea. That is because they are short-sighted, that is because they do not see where their theories are leading them.

"No Socialist Government conducting the entire life and industry of the country could afford to allow free, sharp, and violently worded expressions of public discontent. They would have to fall back on some form of Gestapo, no doubt very humanely directed in the first instance. And this would nip opinion in the bud; it would stop criticism as it reared its head, and it would gather all the power to the supreme party and the party leaders, rising like stately pinnacles over their vast bureaucracies of Civil servants, no longer servants, and no longer civil. And where would the ordinary simple folk—the common people, as they like to call them in America—where would they be, once this mighty organism had got them in its grip?"

This is not an absurdity; it is the reality of politics today, when emotional screaming and hysteria, reflexive blind hatred, ridicule and contempt, and the worship of conventionality and subjectivity have replaced objectivity. Even in June 1945, Clement Attlee could only reply with a patronising sneer at Churchill's bombastic style of argument, and would not come down from his high horse to address Churchill's argument itself: